Summary

AI is moving from novelty to normal. U.S. small business adoption is rising, but many firms still hesitate because AI feels irrelevant or intimidating, especially for smaller teams. [1]

When AI is implemented as a workflow (not just a chat window), research shows meaningful productivity gains. A large study of customer-support work found AI assistance increased issues resolved per hour by about 14% on average, with even larger gains for less experienced workers. [2]

The non-obvious part is that “AI without becoming developers” does not mean “AI without engineering.” Once AI touches business data, customers, or automated actions, you need guardrails, governance, and monitoring. NIST’s AI Risk Management Framework emphasizes governance and an iterative risk management cycle across AI use. [3]

If you want AI to drive measurable results (and not create a new category of risk), the fastest path is typically a partner who can design the workflow, connect systems securely, and prove impact with metrics. That is where eLink Design fits.

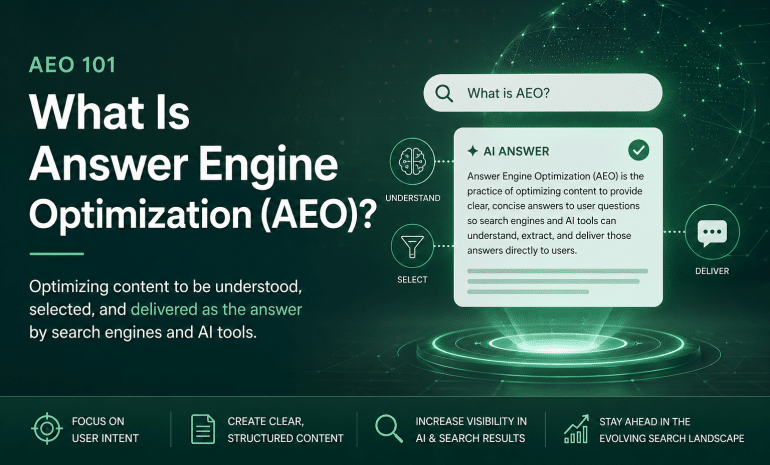

The business case for AI without a dev team

Most businesses do not need a machine learning department. They need sharper processes.

U.S. government research highlights a key reality: many small businesses still believe AI is “not applicable,” and the next most common concerns include lack of knowledge and privacy. [4] That gap is the opportunity. If your competitors automate faster proposals, faster customer responses, and cleaner reporting, they can pressure your margins without changing their product.

Adoption is also climbing. In Census-based analysis summarized by the U.S. Small Business Administration’s Office of Advocacy, the share of small businesses (under 250 employees) using AI rose from about 6.3% to 8.8% over the observed window, with large firms reporting higher rates. [5] The same report notes that many firms with fewer than five employees cite relevance as the reason they are not planning to use AI, which suggests the barrier is often perception and confidence, not capability. [4]

Now the punchline: companies that treat AI as “just another tool” often stall. AI becomes valuable when it is implemented as a repeatable workflow that improves a KPI: response time, resolution time, lead speed-to-contact, reporting cycle time, or error rate.

Even the academic evidence supports the workflow view: in a real-world rollout of a generative AI support assistant, researchers observed higher productivity (issues resolved per hour) and improved outcomes for less experienced agents. [2] That pattern matters for small and mid-sized companies: AI can scale your best practices without requiring you to staff up immediately.

AI use cases

This section is intentionally practical. These are common, high-impact AI outcomes that do not require you to “become developers” internally, but do benefit from professional implementation, integration, and safeguards.

| Business area | What AI can do (outcomes) | What eLink implements (non-DIY) | What to measure |
| --- | --- | --- | --- |
| Customer support and service | Draft replies, surface knowledge articles, summarize tickets, standardize tone | AI-assisted support workflow + curated knowledge base + human-in-the-loop review | Time to first response, tickets per agent, resolution time, CSAT |
| Sales and lead handling | Summarize inbound leads, classify intent, route to the right person, draft follow-ups | Lead intake pipeline + CRM/email integration + tracking and QA | Speed-to-lead, follow-up rate, close rate, quote turnaround |
| Operations and admin | Extract structured data from documents, route approvals, generate internal summaries | Document workflow + rules + audit logging; integrate into your systems | Cycle time per process, error rate, rework rate |
| Analytics and reporting | Turn raw dashboards into plain-language weekly insights; highlight anomalies | Automated reporting layer + governance on data definitions | Reporting time saved, exec adoption, fewer “manual spreadsheet hours” |
| Website and digital experience | Content drafting with brand controls; FAQ automation; conversion support | Website integration, analytics hooks, structured content workflows | Conversion rate, engagement, content velocity |
| Custom software and apps | Add AI features to portals, internal tools, web apps, and mobile apps | Product design + secure AI integration + monitoring | Feature usage, cost per transaction, support load reduction |

There is a misconception floating around: “AI is no-code, so we can wing it.” The first half is sometimes true. The second half is where projects go sideways.

Many modern AI tools let you start with prompts and templates. That is great for initial exploration. But the moment you need any of the following, you are doing engineering work, whether you call it that or not:

- You want AI to use your company’s data safely (policies, pricing, inventory, procedures).

- You want AI output to trigger actions (create tickets, send emails, update records).

- You want consistency (same answer regardless of who asks, time of day, or phrasing).

- You want auditability (what did it say, to whom, and why).

- You want risk controls (privacy, leakage prevention, and safe escalation paths).

This is exactly why NIST frames AI risk management as an organizational discipline, not a software toggle. NIST’s AI RMF describes a set of functions (Govern, Map, Measure, Manage) that help organizations operationalize risk management across AI use. [3]

Translation: business value comes from repeatability. Repeatability comes from design and governance.
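To make “auditability” and “safe escalation” less abstract, here is a minimal Python sketch of a review gate that records every decision. Everything in it is hypothetical and chosen for illustration (the `AuditRecord` fields, the `review_gate` function, the 0.8 threshold, and the assumption that the workflow supplies a confidence score); it is not a description of any particular product or of how eLink builds these systems.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AuditRecord:
    """One row of the audit trail: what was said, to whom, and why."""
    recipient: str
    draft: str
    decision: str  # "auto_send" or "escalate"
    reason: str
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def review_gate(recipient, draft, confidence, audit_log, threshold=0.8):
    """Decide whether an AI draft may go out or needs human review.

    `confidence` is assumed to be a 0..1 score supplied by the workflow;
    the threshold is an arbitrary example value.
    """
    if confidence >= threshold:
        decision = "auto_send"
        reason = f"confidence {confidence:.2f} >= {threshold}"
    else:
        decision = "escalate"
        reason = f"confidence {confidence:.2f} < {threshold}"
    # Every decision is logged, including the ones that were auto-approved.
    audit_log.append(AuditRecord(recipient, draft, decision, reason))
    return decision


audit_log = []
print(review_gate("customer@example.com", "Your order ships Friday.", 0.93, audit_log))
print(review_gate("customer@example.com", "Your refund is approved.", 0.41, audit_log))
```

The point of the sketch is the shape, not the numbers: the rule for when a human steps in is written down once, and the log answers “what did it say, to whom, and why” after the fact.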

If you are evaluating a partner, evaluate their ability to do three things: connect systems securely, define operating rules for AI behavior, and measure outcomes.

The risk reality: privacy, security, and governance are part of the project

AI projects fail in two common ways:

- They never move past experimentation.

- They ship without guardrails and create a new category of risk.

For decision-makers, the second failure mode is the one that keeps you up at night.

Security threats in real AI deployments are now documented and organized. OWASP’s Top 10 for Large Language Model Applications lists risks like prompt injection, insecure output handling, sensitive information disclosure, excessive agency, and overreliance. [6] You do not need to be a security engineer to understand the message: if an AI system can be tricked into revealing data or taking unintended actions, that is a business risk, not a “tech problem.”
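One common way to limit “excessive agency” and “insecure output handling” is to treat model output as untrusted input and check it against an allow-list before anything happens. The Python sketch below shows that idea under stated assumptions: the action names, the JSON shape, and the `validate_action` helper are all hypothetical, and a real deployment would need more than this single check.

```python
import json

# Hypothetical allow-list: the only actions this workflow may take,
# and the argument fields each action is allowed to carry.
ALLOWED_ACTIONS = {
    "create_ticket": {"subject", "priority"},
    "draft_reply": {"ticket_id", "body"},
}


def validate_action(raw_model_output: str) -> dict:
    """Parse model output as untrusted input; reject anything off the list."""
    try:
        proposal = json.loads(raw_model_output)
    except json.JSONDecodeError as exc:
        raise ValueError("model output is not valid JSON") from exc

    if not isinstance(proposal, dict):
        raise ValueError("model output must be a JSON object")

    action = proposal.get("action")
    if not isinstance(action, str) or action not in ALLOWED_ACTIONS:
        raise ValueError(f"action not permitted: {action!r}")

    args = proposal.get("args", {})
    if not isinstance(args, dict):
        raise ValueError("args must be a JSON object")

    unexpected = set(args) - ALLOWED_ACTIONS[action]
    if unexpected:
        raise ValueError(f"unexpected fields: {sorted(unexpected)}")

    return {"action": action, "args": args}


approved = validate_action(
    '{"action": "create_ticket", "args": {"subject": "Login issue", "priority": "high"}}'
)
print(approved["action"])

try:
    validate_action('{"action": "delete_customer", "args": {"id": 7}}')
except ValueError as err:
    print(f"blocked: {err}")
```

Even if a prompt injection convinces the model to propose something like `delete_customer`, the request stops at the validator because that action was never on the list.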

Governance is not a buzzword either. NIST emphasizes governance structures, documentation, monitoring, and organizational accountability as part of trustworthy AI risk management. [7]

Data privacy also depends on which product tier and configuration you use. For example, OpenAI states that for its business offerings and API platform, it does not train models on business data by default, and it describes controls for data retention and access. [8] In practical terms, that means you can choose enterprise-oriented configurations aligned with compliance needs, but you still need a partner who sets it up correctly and defines what data can and cannot be used.
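“Defining what data can and cannot be used” often starts with a simple rule: strip obvious personal identifiers before text leaves your systems. The Python sketch below illustrates that step. The patterns and the `redact` helper are illustrative assumptions only; they cover a few U.S.-style formats and are nowhere near a complete PII detector.

```python
import re

# Illustrative patterns only; real PII detection needs much broader coverage.
REDACTION_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.]\d{3}[-.]\d{4}\b"),
}


def redact(text: str) -> str:
    """Replace each matched identifier with a bracketed label."""
    for label, pattern in REDACTION_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


message = "Contact jane.doe@example.com or 502-555-0142 about SSN 123-45-6789."
print(redact(message))
# prints: Contact [EMAIL] or [PHONE] about SSN [SSN].
```

A step like this complements, and does not replace, the provider-side retention and access controls described above.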

This is a core reason businesses hire eLink: the deliverable is not “AI.” The deliverable is “AI that behaves.”

A measurement strategy that keeps AI honest

If you cannot measure impact, you cannot scale it. And if you cannot scale it, AI becomes a recurring budget line item that nobody trusts.

A sane measurement approach has three steps:

- Establish a baseline. What is your current cycle time, response time, or cost per transaction?

- Run a controlled pilot. Start with one workflow and one department.

- Track outcomes weekly and decide: expand, refine, or stop.

Why this matters: evidence shows AI assistance can boost productivity, but benefit is not evenly distributed. In large-scale research on support work, productivity increased on average, with larger gains among less experienced workers. [2] That implies your measurement should segment results by team, ticket type, and complexity, not just overall volume.
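Segmenting results is straightforward once baseline and pilot numbers are recorded per group. The Python sketch below compares the two phases for each segment. The figures are made up for illustration (they are not results from the cited study or from any client), and the segment names and the `uplift_by_segment` helper are hypothetical.

```python
from collections import defaultdict
from statistics import mean

# Made-up pilot data: (segment, phase, tickets resolved per hour).
observations = [
    ("new_agents", "baseline", 2.0), ("new_agents", "baseline", 2.2),
    ("new_agents", "pilot", 2.7), ("new_agents", "pilot", 2.8),
    ("senior_agents", "baseline", 3.0), ("senior_agents", "baseline", 3.2),
    ("senior_agents", "pilot", 3.1), ("senior_agents", "pilot", 3.3),
]


def uplift_by_segment(rows):
    """Return the percent change from baseline to pilot for each segment.

    Assumes every segment has at least one baseline and one pilot row.
    """
    grouped = defaultdict(lambda: defaultdict(list))
    for segment, phase, value in rows:
        grouped[segment][phase].append(value)

    result = {}
    for segment, phases in grouped.items():
        base = mean(phases["baseline"])
        pilot = mean(phases["pilot"])
        result[segment] = (pilot - base) / base * 100
    return result


for segment, pct in uplift_by_segment(observations).items():
    print(f"{segment}: {pct:+.1f}%")
```

With numbers like these, an overall average would hide the fact that one group improved far more than the other, which is exactly the information you need for the expand, refine, or stop decision.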

How eLink Design approaches AI projects (and why businesses hire us)

If you want AI to help your business, you have two options:

Option A: a set of disconnected experiments that fade when someone gets busy.

Option B: a system your team can depend on.

eLink’s approach is built for Option B.

What eLink Design delivers (service-focused)

Discovery and prioritization

We identify your highest-leverage workflows: places where speed, consistency, and accuracy matter.

Workflow and data design

We map where data lives, who owns it, what rules apply, and what must never leave a system. This aligns with NIST’s emphasis on governance, context mapping, and iterative risk management. [9]

Implementation and integration

This is where “no developers” becomes “you do not need developers, because we are your developers.” We integrate tools, connect APIs, build secure automation, and implement guardrails informed by known LLM risks like prompt injection and data disclosure. [6]

Testing, rollout, and training

We test for accuracy, failure modes, and safe escalation. We train staff, so the system gets used, not ignored.

Monitoring and improvement

We track the metrics outlined above and evolve the workflow as your business changes.

A Kentucky note: local businesses feel the stakes differently

Kentucky businesses are often competing with larger regional and national players, but with leaner teams and tighter time budgets. That is exactly where AI workflows can matter: faster quotes, faster responses, cleaner reporting, and fewer dropped balls when the office is busy. When your competition is moving faster, “we will get to it someday” is not a strategy. It is an invitation.

eLink is Kentucky-based, works with Kentucky businesses alongside companies in nearly every state in the U.S., and builds practical systems that help local teams do more without pretending they have Silicon Valley headcount.

Learn more about eLink’s background.

Conclusion

If AI feels useful but intimidating, you are not behind. You are normal. The SBA’s research highlights that many small businesses cite relevance and knowledge gaps as reasons for not adopting AI. [4] The advantage goes to companies that move from “we tried it” to “we shipped it.”

If you want AI that integrates with your real systems, respects your data, and produces measurable outcomes, talk to eLink Design.

Andrew Chiles

CEO, eLink Design

Sources:

U.S. Small Business Administration (Office of Advocacy) – AI in Business: Small Firms Closing In (Census BTOS-based)

https://advocacy.sba.gov/wp-content/uploads/2025/09/Research-Spotlight-AI-in-Business-Small-Firms-Closing-In_-092425.pdf

NBER – Generative AI at Work (Brynjolfsson, Li, Raymond)

https://www.nber.org/papers/w31161

NIST – Artificial Intelligence Risk Management Framework (AI RMF 1.0)

https://nvlpubs.nist.gov/nistpubs/ai/NIST.AI.100-1.pdf

NIST – AI RMF: Generative Artificial Intelligence Profile (NIST AI 600-1)

https://nvlpubs.nist.gov/nistpubs/ai/NIST.AI.600-1.pdf

OWASP – Top 10 for Large Language Model Applications

https://owasp.org/www-project-top-10-for-large-language-model-applications/

OpenAI – Enterprise privacy commitments (business data not used for training by default; controls)

https://openai.com/enterprise-privacy/

